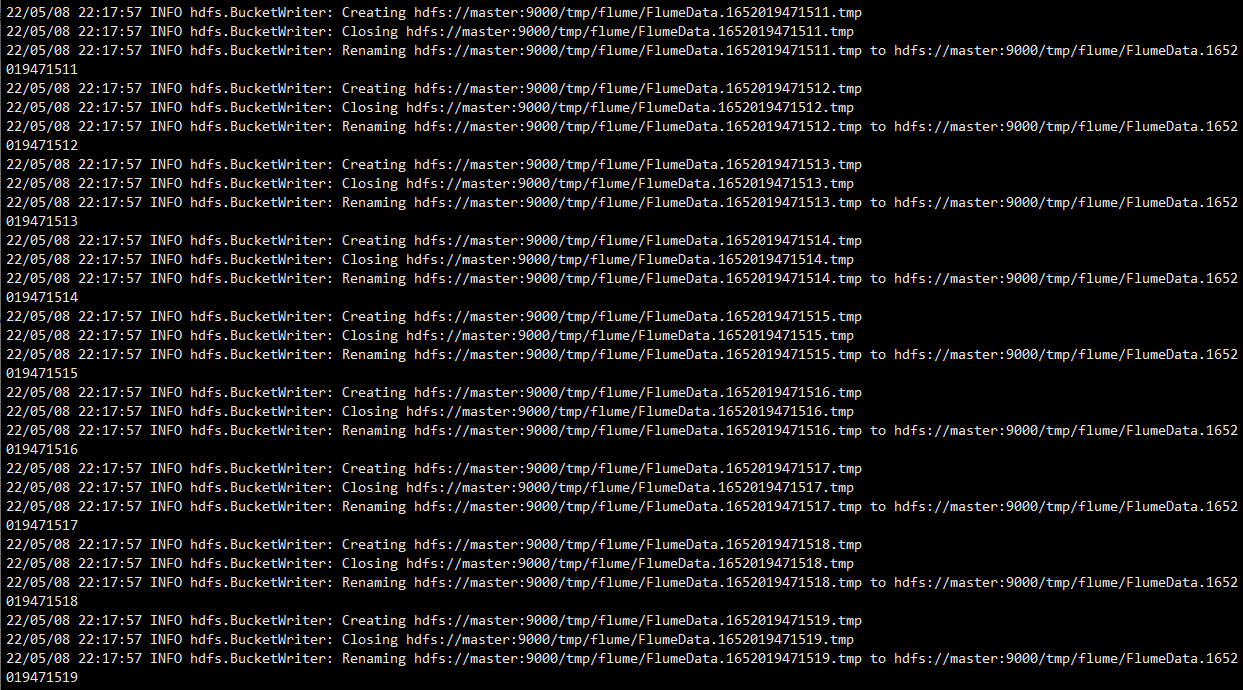

Upload the Flume installation package:

    [root@master ~]# cd /opt/software/
    [root@master software]# ls
    apache-flume-1.6.0-bin.tar.gz  hadoop-2.7.1.tar.gz  jdk-8u152-linux-x64.tar.gz  mysql-5.7.18.zip  sqoop-1.4.7.bin__hadoop-2.6.0.tar.gz
    apache-hive-2.0.0-bin.tar.gz  hbase-1.2.1-bin.tar.gz  mysql-5.7.18  mysql-connector-java-5.1.46.jar  zookeeper-3.4.8.tar.gz

As root, extract Flume into /usr/local/src:

    [root@master software]# tar xf /opt/software/apache-flume-1.6.0-bin.tar.gz -C /usr/local/src/

Rename the Flume installation directory:

    [root@master software]# cd /usr/local/src
    [root@master src]# mv apache-flume-1.6.0-bin flume

Change the owner and group of the directory to the hadoop user and the hadoop group:

    [root@master src]# chown -R hadoop:hadoop /usr/local/src/

Edit the system environment variable file:

    [root@master src]# vi /etc/profile.d/flume.sh

Add:

    export FLUME_HOME=/usr/local/src/flume
    export PATH=${FLUME_HOME}/bin:$PATH

Switch to the hadoop user:

    [root@master src]# su - hadoop

Check that PATH now contains /usr/local/src/flume/bin:

    [hadoop@master ~]$ echo $PATH
    /usr/local/src/zookeeper/bin:/usr/local/src/sqoop/bin:/usr/local/src/hbase/bin:/usr/local/src/jdk/bin:/usr/local/src/hadoop/bin:/usr/local/src/hadoop/sbin:/usr/local/src/flume/bin:/usr/local/bin:/bin:/usr/bin:/usr/local/sbin:/usr/sbin:/usr/local/src/hive/bin:/home/hadoop/.local/bin:/home/hadoop/bin

Edit hbase-env.sh and comment out the HBASE_CLASSPATH line:

    [hadoop@master ~]$ vim /usr/local/src/hbase/conf/hbase-env.sh
    #export HBASE_CLASSPATH=/usr/local/src/hadoop/etc/hadoop/

Copy the flume-env.sh.template file:

    [hadoop@master ~]$ cd /usr/local/src/flume/conf
    [hadoop@master conf]$ cp flume-env.sh.template flume-env.sh

Edit flume-env.sh:

    [hadoop@master conf]$ vi flume-env.sh

Change:

    export JAVA_HOME=/usr/local/src/jdk

Start Hadoop (in this run every daemon was already running, hence the "Stop it first" messages):

    [hadoop@master conf]$ start-all.sh
    This script is Deprecated. Instead use start-dfs.sh and start-yarn.sh
    Starting namenodes on [master]
    hadoop@master's password:
    master: namenode running as process 50448. Stop it first.
    192.168.100.30: datanode running as process 43460. Stop it first.
    192.168.100.20: datanode running as process 46094. Stop it first.
    Starting secondary namenodes [0.0.0.0]
    hadoop@0.0.0.0's password:
    0.0.0.0: secondarynamenode running as process 50670. Stop it first.
    starting yarn daemons
    resourcemanager running as process 50836. Stop it first.
    192.168.100.30: nodemanager running as process 43584. Stop it first.
    192.168.100.20: nodemanager running as process 46228. Stop it first.

After running the command above, make sure the NameNode, SecondaryNameNode and ResourceManager processes are running on master, and that the DataNode and NodeManager processes are visible on each slave node.

Check on master:

    [hadoop@master conf]$ jps
    50448 NameNode
    50836 ResourceManager
    47502 QuorumPeerMain
    50670 SecondaryNameNode
    55855 Jps

Check on slave1:

    [root@slave1 ~]# su - hadoop
    [hadoop@slave1 ~]$ jps
    2070 DataNode
    2364 Jps
    2191 NodeManager

Check on slave2:

    [root@slave2 ~]# su - hadoop
    [hadoop@slave2 ~]$ jps
    2166 NodeManager
    2055 DataNode
    2345 Jps

Verify the installation:

    [hadoop@master conf]$ flume-ng version
    Flume 1.6.0
    Source code repository: https://git-wip-us.apache.org/repos/asf/flume.git
    Revision: 2561a23240a71ba20bf288c7c2cda88f443c2080
    Compiled by hshreedharan on Mon May 11 11:15:44 PDT 2015
    From source with checksum b29e416802ce9ece3269d34233baf43f

If the command reports Flume version 1.6.0, the installation succeeded.

Create the simple-hdfs-flume.conf file in the Flume installation directory:

    [hadoop@master conf]$ cd /usr/local/src/flume
    [hadoop@master flume]$ vi simple-hdfs-flume.conf
    a1.sources=r1
    a1.sinks=k1
    a1.channels=c1
    a1.sources.r1.type=spooldir
    a1.sources.r1.spoolDir=/usr/local/src/hadoop/logs
    a1.sources.r1.fileHeader=true
    a1.sources.r1.deserializer.maxLineLength=30000
    a1.sinks.k1.type=hdfs
    a1.sinks.k1.hdfs.path=hdfs://master:9000/tmp/flume
    a1.sinks.k1.hdfs.rollSize=1024000
    a1.sinks.k1.hdfs.rollCount=0
    a1.sinks.k1.hdfs.rollInterval=900
    a1.sinks.k1.hdfs.useLocalTimeStamp=true
    a1.channels.c1.type=file
    a1.channels.c1.capacity=10000
    a1.channels.c1.transactionCapacity=1000
    a1.sources.r1.channels=c1
    a1.sinks.k1.channel=c1

Flume property names are case-sensitive, so the key must be spelled hdfs.rollSize. The run captured below used hdfs.rollsize, which the sink does not recognize; the default roll size of 1024 bytes then applies, which is most likely why the output files are only about 1.3 KB each.

Delete /tmp on HDFS:

    [hadoop@master flume]$ hdfs dfs -rm -r /tmp
    22/05/08 22:16:44 INFO fs.TrashPolicyDefault: Namenode trash configuration: Deletion interval = 0 minutes, Emptier interval = 0 minutes.
    Deleted /tmp

Create /tmp/flume:

    [hadoop@master flume]$ hdfs dfs -mkdir -p /tmp/flume

List the HDFS root directory:

    [hadoop@master flume]$ hdfs dfs -ls /
    Found 5 items
    drwxr-xr-x   - hadoop supergroup          0 2022-04-29 15:26 /hbase
    drwxr-xr-x   - hadoop supergroup          0 2022-04-29 11:59 /input
    drwxr-xr-x   - hadoop supergroup          0 2022-04-29 12:00 /output
    drwxr-xr-x   - hadoop supergroup          0 2022-05-08 22:16 /tmp
    drwxr-xr-x   - hadoop supergroup          0 2022-04-29 16:49 /user

Load simple-hdfs-flume.conf with the flume-ng agent command and start Flume to transfer the data:

    [hadoop@master flume]$ flume-ng agent --conf-file simple-hdfs-flume.conf --name a1
    22/05/08 22:17:56 INFO hdfs.BucketWriter: Creating hdfs://master:9000/tmp/flume/FlumeData.1652019471497.tmp
    22/05/08 22:17:56 INFO hdfs.BucketWriter: Closing hdfs://master:9000/tmp/flume/FlumeData.1652019471497.tmp
    22/05/08 22:17:56 INFO hdfs.BucketWriter: Renaming hdfs://master:9000/tmp/flume/FlumeData.1652019471497.tmp to hdfs://master:9000/tmp/flume/FlumeData.1652019471497
    22/05/08 22:17:56 INFO hdfs.BucketWriter: Creating hdfs://master:9000/tmp/flume/FlumeData.1652019471498.tmp
    22/05/08 22:17:56 INFO hdfs.BucketWriter: Closing hdfs://master:9000/tmp/flume/FlumeData.1652019471498.tmp
    22/05/08 22:17:56 INFO hdfs.BucketWriter: Renaming hdfs://master:9000/tmp/flume/FlumeData.1652019471498.tmp to hdfs://master:9000/tmp/flume/FlumeData.1652019471498
    22/05/08 22:17:56 INFO hdfs.BucketWriter: Creating hdfs://master:9000/tmp/flume/FlumeData.1652019471499.tmp
    22/05/08 22:17:56 INFO hdfs.BucketWriter: Closing hdfs://master:9000/tmp/flume/FlumeData.1652019471499.tmp
    22/05/08 22:17:56 INFO hdfs.BucketWriter: Renaming hdfs://master:9000/tmp/flume/FlumeData.1652019471499.tmp to hdfs://master:9000/tmp/flume/FlumeData.1652019471499
    22/05/08 22:17:56 INFO hdfs.BucketWriter: Creating hdfs://master:9000/tmp/flume/FlumeData.1652019471500.tmp
    22/05/08 22:17:57 INFO hdfs.BucketWriter: Closing hdfs://master:9000/tmp/flume/FlumeData.1652019471500.tmp
    22/05/08 22:17:57 INFO hdfs.BucketWriter: Renaming hdfs://master:9000/tmp/flume/FlumeData.1652019471500.tmp to hdfs://master:9000/tmp/flume/FlumeData.1652019471500
    22/05/08 22:17:57 INFO hdfs.BucketWriter: Creating hdfs://master:9000/tmp/flume/FlumeData.1652019471501.tmp
    22/05/08 22:17:57 INFO hdfs.BucketWriter: Closing hdfs://master:9000/tmp/flume/FlumeData.1652019471501.tmp
    22/05/08 22:17:57 INFO hdfs.BucketWriter: Renaming hdfs://master:9000/tmp/flume/FlumeData.1652019471501.tmp to hdfs://master:9000/tmp/flume/FlumeData.1652019471501
    22/05/08 22:17:57 INFO hdfs.BucketWriter: Creating hdfs://master:9000/tmp/flume/FlumeData.1652019471502.tmp
    22/05/08 22:17:57 INFO hdfs.BucketWriter: Closing hdfs://master:9000/tmp/flume/FlumeData.1652019471502.tmp
    22/05/08 22:17:57 INFO hdfs.BucketWriter: Renaming hdfs://master:9000/tmp/flume/FlumeData.1652019471502.tmp to hdfs://master:9000/tmp/flume/FlumeData.1652019471502
    22/05/08 22:17:57 INFO hdfs.BucketWriter: Creating hdfs://master:9000/tmp/flume/FlumeData.1652019471503.tmp
    22/05/08 22:17:57 INFO hdfs.BucketWriter: Closing hdfs://master:9000/tmp/flume/FlumeData.1652019471503.tmp
    22/05/08 22:17:57 INFO hdfs.BucketWriter: Renaming hdfs://master:9000/tmp/flume/FlumeData.1652019471503.tmp to hdfs://master:9000/tmp/flume/FlumeData.1652019471503

View the files Flume transferred to HDFS:

    [hadoop@master flume]$ hdfs dfs -ls /tmp/flume
    -rw-r--r--   2 hadoop supergroup       1329 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471536
    -rw-r--r--   2 hadoop supergroup       1479 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471537
    -rw-r--r--   2 hadoop supergroup       1360 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471538
    -rw-r--r--   2 hadoop supergroup       1249 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471539
    -rw-r--r--   2 hadoop supergroup       1349 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471540
    -rw-r--r--   2 hadoop supergroup       1550 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471541
    -rw-r--r--   2 hadoop supergroup       1241 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471542
    -rw-r--r--   2 hadoop supergroup       1372 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471543
    -rw-r--r--   2 hadoop supergroup       1362 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471544
    -rw-r--r--   2 hadoop supergroup       1485 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471545
    -rw-r--r--   2 hadoop supergroup      17253 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471546
    -rw-r--r--   2 hadoop supergroup       1296 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471547
    -rw-r--r--   2 hadoop supergroup       1285 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471548
    -rw-r--r--   2 hadoop supergroup       1447 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471549
    -rw-r--r--   2 hadoop supergroup       1363 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471550
    -rw-r--r--   2 hadoop supergroup       1246 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471551
    -rw-r--r--   2 hadoop supergroup       1366 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471552
    -rw-r--r--   2 hadoop supergroup       1630 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471553
    -rw-r--r--   2 hadoop supergroup       1250 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471554
    -rw-r--r--   2 hadoop supergroup       1425 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471555
    -rw-r--r--   2 hadoop supergroup       1329 2022-05-08 22:17 /tmp/flume/FlumeData.1652019471556
    ……

If data files appear in the /tmp/flume directory on HDFS, the transfer succeeded.

Load the simple-hdfs-flume.conf configuration with the flume-ng agent command:

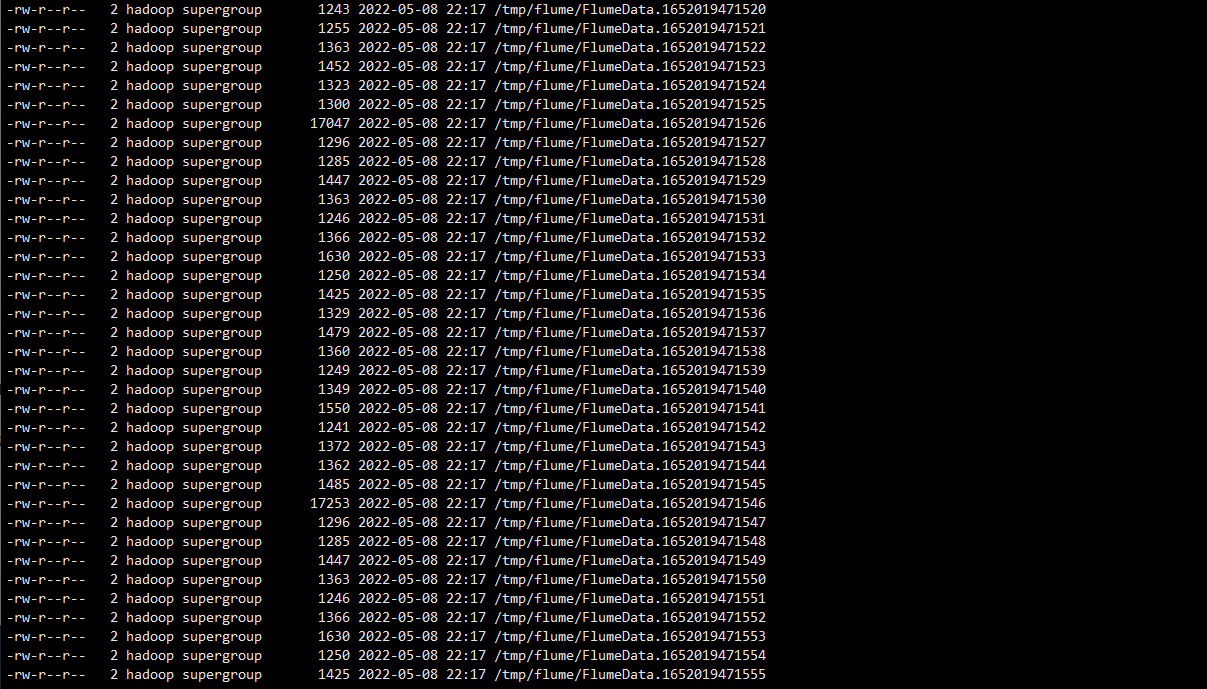
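Flume property names are case-sensitive, so a key such as `hdfs.rollsize` (instead of `hdfs.rollSize`) is silently ignored and the sink falls back to its default. The following is a minimal sketch of a pre-flight check that could be run before starting the agent. `check_roll_keys` is a hypothetical helper written for this illustration, not part of Flume, and it only looks at the three `hdfs.roll*` keys used in this walkthrough.

```shell
# check_roll_keys FILE
# Hypothetical helper (not part of Flume): prints every hdfs.roll* key whose
# capitalisation differs from the documented spelling and returns non-zero
# if at least one such key was found.
check_roll_keys() {
    awk -F= '
        BEGIN { bad = 0 }
        /^[ \t]*#/ { next }                  # skip comment lines
        {
            key = $1
            gsub(/[ \t]/, "", key)           # trim whitespace around the key
            lower = tolower(key)
            if (lower ~ /\.hdfs\.roll(size|count|interval)$/ &&
                key !~ /\.hdfs\.roll(Size|Count|Interval)$/) {
                print "suspicious key: " key
                bad = 1
            }
        }
        END { exit bad }
    ' "$1"
}
```

It could be called as `check_roll_keys simple-hdfs-flume.conf` from the Flume installation directory; no output and a zero exit status mean that the roll settings are spelled as the HDFS sink expects.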

View the files Flume transferred to HDFS:

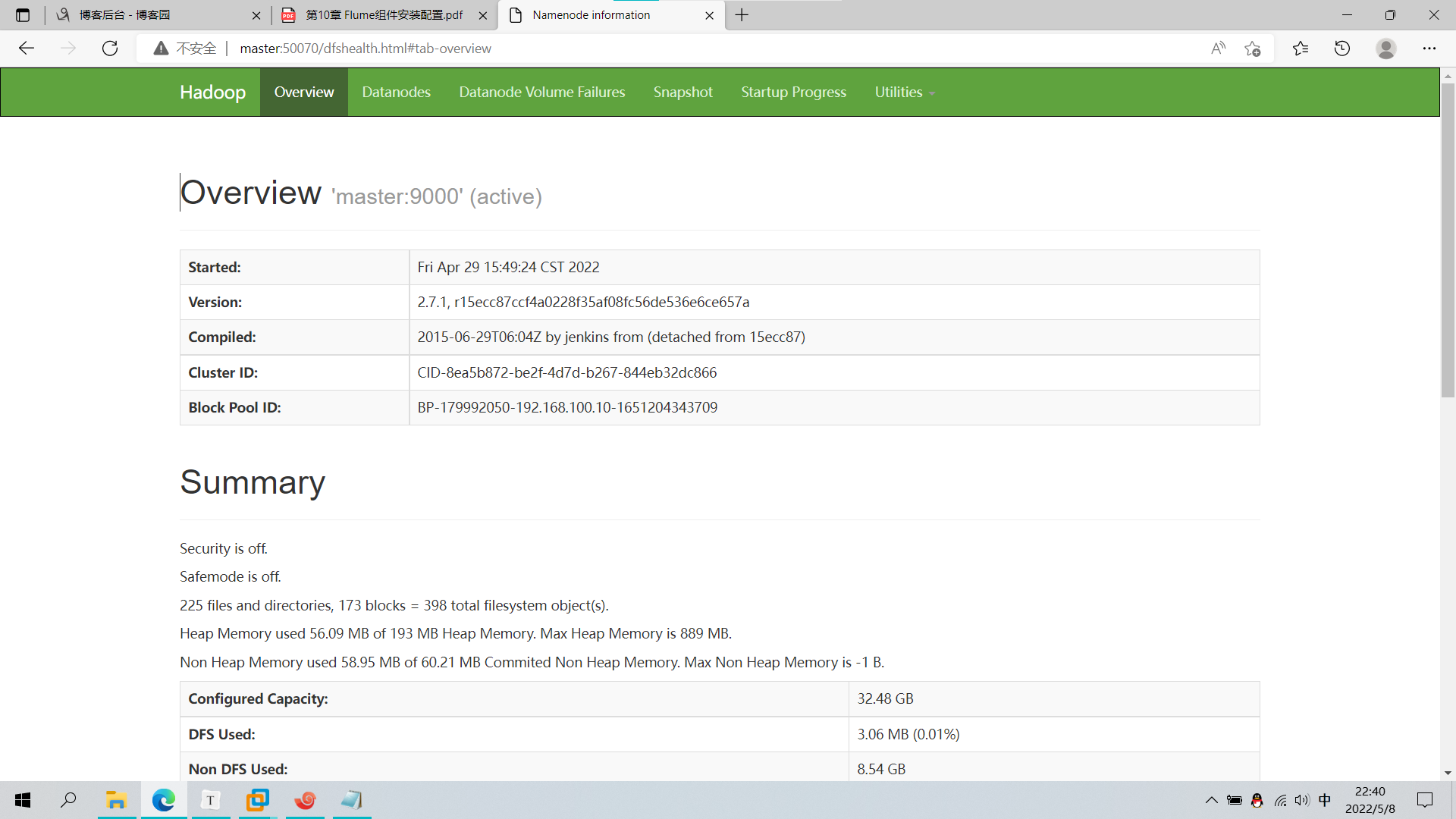
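With many small files in the listing, a one-line summary can be easier to read than the raw output. The sketch below assumes the usual `hdfs dfs -ls` column layout (permissions, replication, owner, group, size, date, time, path); `summarize_ls` is a hypothetical helper name introduced here for illustration.

```shell
# summarize_ls
# Hypothetical helper: reads `hdfs dfs -ls` output on stdin and prints the
# number of regular files together with their total size in bytes.
summarize_ls() {
    awk '
        BEGIN { files = 0; bytes = 0 }
        /^-/  { files++; bytes += $5 }   # regular files start with "-"; field 5 is the size
        END   { printf "%d files, %d bytes\n", files, bytes }
    '
}
```

On the cluster it would be used as `hdfs dfs -ls /tmp/flume | summarize_ls`. Directory entries and the "Found N items" header are ignored because they do not start with `-`.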

View in a browser: http://master:50070

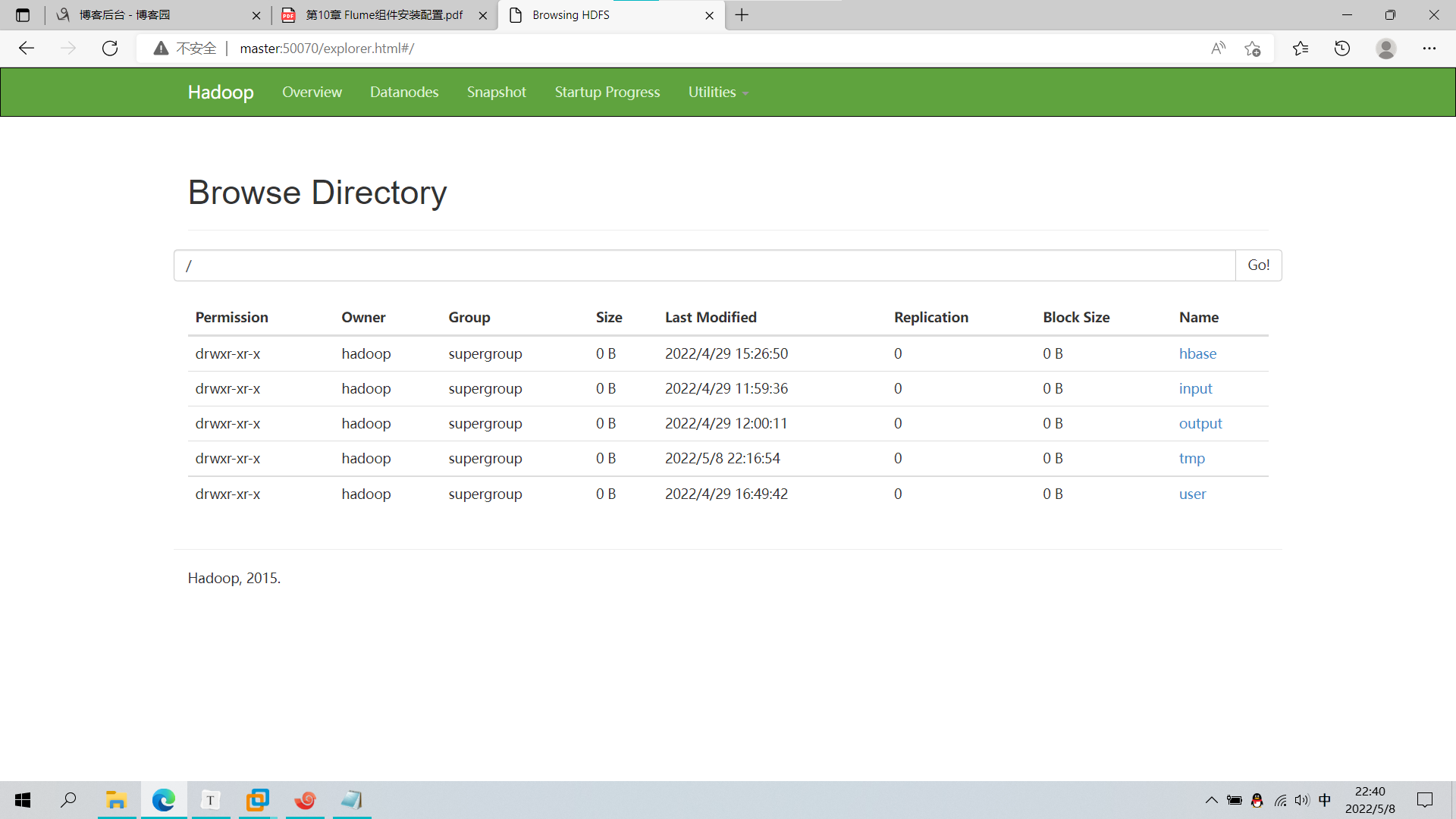
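The same NameNode web port can usually be queried from the command line through the WebHDFS REST API, which avoids needing a browser on the cluster network. This is only a sketch: it assumes WebHDFS is enabled on the NameNode (the `dfs.webhdfs.enabled` setting), and `webhdfs_url` is a hypothetical helper that merely assembles the URL.

```shell
# webhdfs_url HOST PORT PATH OP
# Hypothetical helper: builds a WebHDFS REST URL for the given HDFS path and
# operation. It performs no network access by itself.
webhdfs_url() {
    printf 'http://%s:%s/webhdfs/v1%s?op=%s\n' "$1" "$2" "$3" "$4"
}

# Example (run on a host that can resolve "master"):
#   curl -s "$(webhdfs_url master 50070 /tmp/flume LISTSTATUS)"
```

If WebHDFS is available, the `LISTSTATUS` operation returns a JSON document describing the entries under /tmp/flume.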

View the files:
